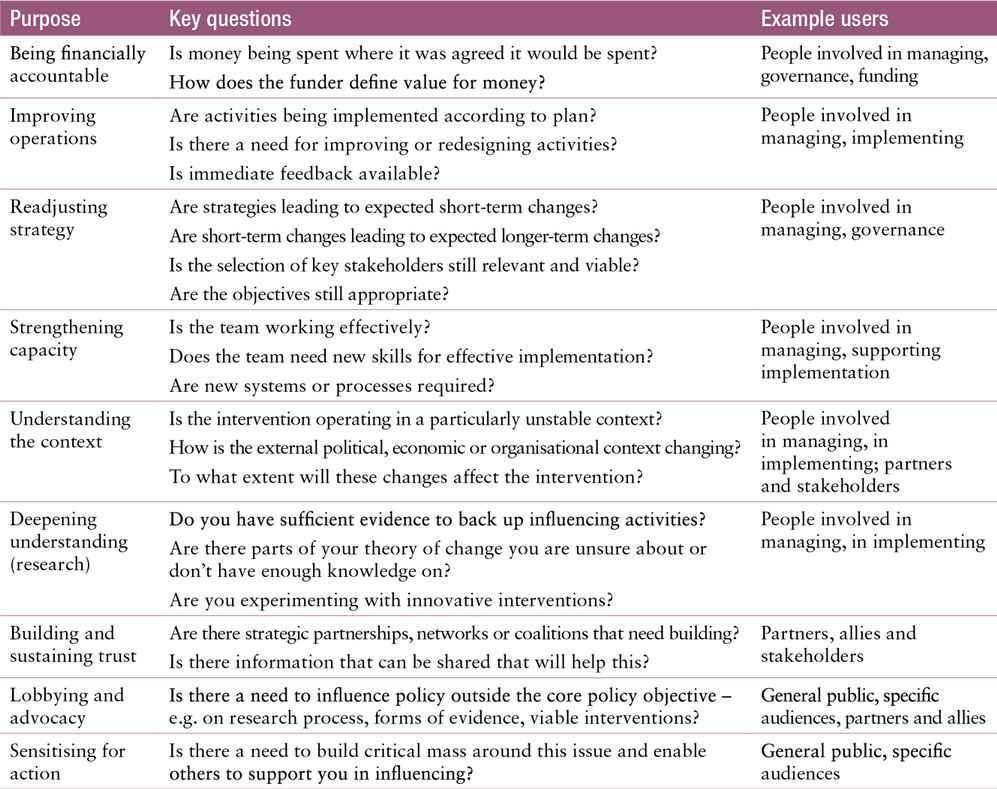

Broadly, the purposes behind M&E are usually viewed in terms of learning (to improve what we are doing) and accountability (to prove to different stakeholders that what we are doing is valuable). But we need to be more specific. Below is a list of nine purposes that summarises different motivations and uses of M&E. Each will involve different elements of learning and accountability in a way that recognises the importance and interconnectedness of both rather than setting them in competition with each other.

The first five purposes pertain to managing the intervention; the last four could be part of the intervention itself as strategies that directly contribute to the overall goal.

List of nine learning and accountability purposes

- Being financially accountable: proving the implementation of agreed plans and production of outputs within pre-set tolerance limits (e.g. recording which influencing activities/outputs have been funded with what effect).

- Improving operations: adjusting activities and outputs to achieve more and make better use of resources (e.g. asking for feedback from audiences/targets/partners/experts).

- Readjusting strategy: questioning assumptions and theories of change (e.g. tracking effects of workshops to test effectiveness for influencing change of behaviour).

- Strengthening capacity: improving performance of individuals and organisations (e.g. peer review of team members to assess whether there is a sufficient mix of skills).

- Understanding the context: sensing changes in policy, politics, environment, economics, technology and society related to implementation (e.g. gauging policy-maker interest in an issue or ability to act on evidence).

- Deepening understanding (research): increasing knowledge on any innovative, experimental or uncertain topics pertaining to the intervention, the audience, the policy areas etc. (e.g. testing a new format for policy briefs to see if they improve ability to challenge beliefs of readers).

- Building and sustaining trust: sharing information for increased transparency and participation (e.g. sharing data as a way of building a coalition and involving others).

- Lobbying and advocacy: using programme results to influence the broader system (e.g. challenging narrow definitions of credible evidence).

- Sensitising for action: building a critical mass of support for a concern/experience (e.g. sharing results to enable the people who are affected to take action for change).

There are two very practical reasons for considering these learning and accountability purposes.

First, making the purposes explicit directly links monitoring to the programme objectives and makes it clear to everyone involved why monitoring is important.

This may be about informing stakeholders what is being done and what the effect is in order to sustain support for the intervention, or it could be to improve the ability of the team to effect the desired change. If this link is not clear then motivation for monitoring will likely decrease and it will be difficult to maintain participation and quality.

Second, each purpose will have different information requirements, different times and frequencies at which information is needed, different levels of analysis, different spaces where analysis takes place and information is communicated and different people involved in using the information.

Clarifying the learning and accountability priorities can help thread these elements together to form a monitoring system embedded in existing organisational practices.

Table 8 presents a set of questions that can help decide the priority learning and accountability purposes. It also suggests possible users of the monitoring information gathered for each purpose. This helps when thinking through who needs to be engaged and what specifically they will need.

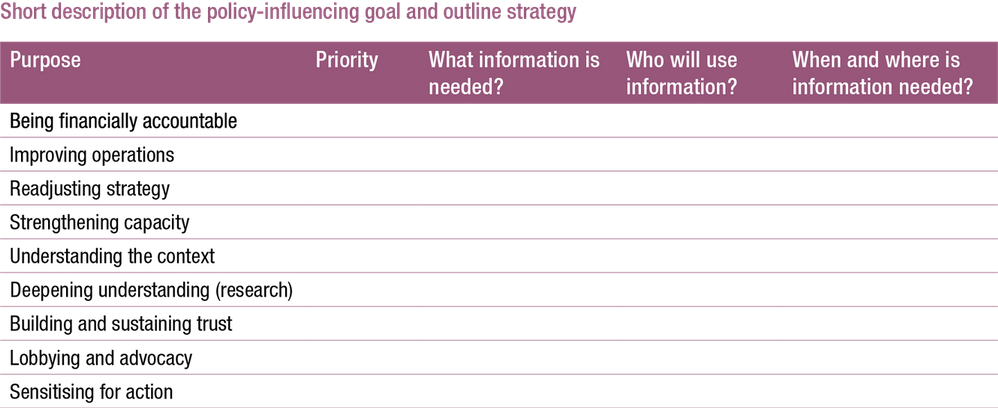

Table 9 is a tool you can use for planning your monitoring by indicating the priority purpose(s) and describing where and when information is needed and who needs to be involved. The next step is to decide what information is required; this is described in the next section.

Each of the purposes listed above will require different types of information about the intervention and its environment.

For example, ‘financial accountability’ will require accurate information about the quality and quantity of what has been done and the resources used; ‘understanding the context’ will entail knowing about the people in charge of the policy area and their incentives; and ‘strengthening capacity’ will need information about performance of team members and partners, and the competencies required for the intervention.

Monitoring for strategy and management

Beyond serving learning and accountability, monitoring helps ensure you remain headed in the right direction. There are six levels at which you can monitor:

- Strategy and direction (are you doing the right thing?)

- Management and governance (are you implementing the plan accurately and efficiently?)

- Outputs (do the outputs meet required standards and appropriateness for the audience?)

- Uptake (are people aware of, accessing and sharing your work?)

- Outcomes and impact (what kind of effect or change did the work contribute to?)

- Context (how does the changing political, economic and organisational climate affect your plans?)

For each of these levels, there are different measures you could consider monitoring. The full range of measures is presented below: for each of the levels, the measures are presented in a rough order of priority. You will probably already be working with many of them, but it is useful to go through the list below to see whether there are others you may need to consider.

Strategy and direction

For many people working to influence policy, the choice of interventions will depend on the theory of change. Many start by making their theory of change explicit. This not only helps ensure a sound strategy in the first place, but also enables regular review and refinement at a strategic level. Practically, it helps identify key areas for monitoring and baseline data collection. Regardless of how a theory of change is presented, it can be assessed by questioning the following features:

- How the theory describes the long-term change that is the overall goal of the intervention: is the desired long-term change still relevant?

- How the theory addresses context: is the strategy still appropriate for the context? Has the context changed significantly? Does the strategy need to change?

- The assumptions about how change may occur at any point in the theory, and about the external factors that may affect whether the interventions have the desired effects: are the assumptions about policy change holding true? Has anything unexpected happened?

- How the theory assesses the different mechanisms that could affect long-term change: is the assessment of the mechanisms affecting policy change still valid?

- What interventions are being used to bring about long-term change? Is there the right overall mix of interventions? Are the interventions having the desired effect, demonstrating movement in the right direction?

Management

Management monitoring can be simplified down to recording what is being done, by whom, with whom, when and where. A systematic record of engagement activities can help make sense of the pathways of change later on.

Management monitoring can also involve assessing whether the most appropriate systems are in place, the best mix of people with the right set of skills are involved and the intervention is structured in the most effective way. This is particularly important when strategic policy-influencing introduces new ways of working for an organisation. Included in this is the regular assessment of the monitoring and decision-making processes themselves.

- Management and governance processes: how do organisational incentives help/hinder policy-influencing? Is learning from the team leading to improved interventions? Is the team working in a coordinated, joined-up way?

- Implemented activities: what has been done? When and where was it done? Who was involved?

- The mix of skills within the team: given the strategy, what capacity/expertise needs to be developed or bought in?

- Capacity and performance of individual team members: how are team members, contractors and partners performing at given tasks? What difference has training/capacity-building made?
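As one possible illustration of the 'systematic record of engagement activities' described above, the sketch below keeps a simple log of what was done, by whom, with whom, when and where. The class name, field names and sample entries are hypothetical and are not part of the original guidance; a spreadsheet with the same columns would serve equally well.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class EngagementActivity:
    """One row in a hypothetical engagement log."""
    what: str
    when: date
    where: str
    by_whom: list[str]
    with_whom: list[str] = field(default_factory=list)


# Illustrative entries only; names, dates and places are invented.
log = [
    EngagementActivity(
        what="Workshop on evidence use",
        when=date(2024, 6, 12),
        where="Regional office",
        by_whom=["Programme officer"],
        with_whom=["Policy adviser A", "Partner organisation B"],
    ),
    EngagementActivity(
        what="Breakfast meeting on draft policy brief",
        when=date(2024, 3, 5),
        where="Ministry office",
        by_whom=["Research lead"],
        with_whom=["Policy adviser A"],
    ),
]


def activities_with(entries, contact):
    """Return the activities that involved a given contact, oldest first."""
    involved = [a for a in entries if contact in a.with_whom]
    return sorted(involved, key=lambda a: a.when)


for activity in activities_with(log, "Policy adviser A"):
    print(activity.when.isoformat(), activity.what, "at", activity.where)
```

Listing activities per contact in date order is one way such a record can later help trace a pathway of change with a particular policy actor.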

Outputs

Outputs are the products of the influencing intervention and communication activities. Policy briefs, blogs, Twitter, events, media, breakfast meetings, networks, mailing lists, conferences and workshops are all potential outputs.

It is not enough to just count outputs: quality, relevance, credibility and accessibility are all key criteria that need to be considered.

- Quality: are the project’s outputs of the highest possible quality, based on the best available knowledge?

- Relevance: are the outputs presented so they are well situated in the current context? Do they show an understanding of the real issue that policy-makers face? Is the appropriate language used?

- Credibility: are the sources trusted? Were appropriate methods used? Has the internal/external validity been discussed?

- Accessibility: are they designed and structured in a way that enhances the main messages and makes them easier to digest? Can target audiences access the outputs easily and engage with them? To whom have outputs been sent, when and through which channels?

- Quantity: how many different kinds of outputs have been produced?

Uptake

Uptake is what happens after delivering outputs or making them available. How are outputs picked up and used? How do target groups respond? The search for where your work is mentioned must include more than academic journals – for example newspapers, broadcast media, training manuals, international standards and operational guidelines, government policy and programme documents, websites, blogs and social media.

Other aspects to consider include the amount of attention given to messages; the size and prominence of the relevant article (or channel and time of day of broadcast); the tone used; and the likely audience.

Secondary distribution of outputs is also important. The most effective channels may be influential individuals who are recommending the work to colleagues or repeating messages through other channels: it is important to capture who they are engaging with and what they are saying. Finally, direct feedback and testimonials from the users of your work should be considered.

The following are the results areas for uptake:

1. Reaction of influential people and target audiences: what kind of feedback and testimonials are you hearing from influential people? How are they responding to your work?

2. Primary reach: who is attending events, subscribing to newsletters, requesting advice or information?

3. Secondary reach: who are the primary audiences sharing your work with and how? What are they saying about it?

4. Media coverage: when? Which publication(s)/channel(s)/programme(s)? How many column inches/minutes of coverage? Was it positive coverage? Who is the likely audience and how large is it?

5. Citations and mentions: who is mentioning you and how? For what purpose: academic, policy or practice?

6. Website/social media interactions: who is interacting with you? What are they interested in?

Outcomes

Monitoring the outcomes you seek is an integral part of ROMA. You should have already set out the outcomes as part of the process of finalising your influencing objective, as outlined in Develop a strategy. Refer back to Table 2 in Realistic Outcomes for the discussion of nine possible outcomes to monitor and the different measures you can use to assess them.

Context

External context is the final area to consider for monitoring. This is important to ensure the continued relevance of the interventions chosen. By this stage, you should be abreast of the shifting politics in your field of work: the agendas and motivations of different actors, who is influencing whom and any new opportunities for getting messages across. You should also be aware of new evidence emerging, or changing uses or perceptions of existing evidence, as well as the wider system of knowledge intermediaries, brokers and coalitions to use. ‘Diagnose the problem’ introduced three dimensions of complex policy contexts: distributed capacities, divergent goals and narratives, and uncertain change pathways. Here are the areas to monitor for each.

When distributed capacities define the context, it is helpful to monitor:

- The decision-making spaces: when, where, how are decisions being made? How are they linked?

- The policy actors involved: who are they? What are their agendas and motivations? How much influence do they have? Who are they influencing? How are they related formally or informally?

When divergent goals and narratives define the context, it is helpful to monitor:

- Prevalent narratives: what are the dominant narratives being used to define the problem? Who is pushing them and why? What opportunities do they offer?

- Directions for change: what are the different pathways already being taken to address the problem and (how) are they aligned to others?

When uncertain change pathways define the context, it is helpful to monitor:

- Windows of opportunity: are there any unexpected events or new ideas that can be capitalised on? Is there anything that can provide ‘room for manoeuvre’?

How to use measures

These individual measures should be treated as a menu from which to choose when developing an M&E plan. Table 9 is the key table to fill in:

- List those measures you are already monitoring (ask yourself: are you monitoring them in sufficient detail?)

- Identify another three or four measures you would like to monitor.

- Use this expanded list to populate the cells in Table 9, identifying which measures will help you meet each of the nine learning and accountability purposes. For example, the quality and quantity of outputs may be used to demonstrate financial accountability (you have spent the money on the outputs you said you would produce), improving operations (you produced them in a timely fashion) and deepening understanding (they represent a significant advance in your knowledge of the issue).

- Look across the table to identify any gaps; if the gaps are significant (i.e. the story of your intervention cannot be told properly), refer back to the list of measures above to work out how to fill them in. Choose the measures that match your intervention and the desired changes they are contributing to.
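The steps above can be sketched in a few lines of Python for teams that keep their plan in a script or notebook rather than on paper. This is only an illustration under stated assumptions: the layout is not the actual Table 9 template, the function names are hypothetical, and only the first mapping (quality and quantity of outputs) comes from the example in the text; the other two are invented to show the mechanics.

```python
# The nine purposes as listed earlier in this section.
PURPOSES = [
    "Being financially accountable",
    "Improving operations",
    "Readjusting strategy",
    "Strengthening capacity",
    "Understanding the context",
    "Deepening understanding (research)",
    "Building and sustaining trust",
    "Lobbying and advocacy",
    "Sensitising for action",
]

# Measure -> purposes it helps to meet.
measures_to_purposes = {
    # From the worked example in the text.
    "Quality and quantity of outputs": [
        "Being financially accountable",
        "Improving operations",
        "Deepening understanding (research)",
    ],
    # Illustrative assumptions, not taken from the guidance.
    "Policy actors involved": ["Understanding the context"],
    "Mix of skills within the team": ["Strengthening capacity"],
}


def build_purpose_table(mapping):
    """Invert measure -> purposes into purpose -> measures."""
    table = {purpose: [] for purpose in PURPOSES}
    for measure, purposes in mapping.items():
        for purpose in purposes:
            if purpose not in table:
                raise ValueError(f"Unknown purpose: {purpose!r}")
            table[purpose].append(measure)
    return table


def find_gaps(table):
    """Return the purposes that no chosen measure currently serves."""
    return [purpose for purpose, measures in table.items() if not measures]


table = build_purpose_table(measures_to_purposes)
gaps = find_gaps(table)

for purpose in PURPOSES:
    print(f"{purpose}: {', '.join(table[purpose]) or 'GAP'}")
```

A purpose flagged as a gap only needs attention if it is one of your priority purposes; the check is a prompt to revisit the menu of measures, not a requirement to cover all nine.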